yet2 Insights: The Future of Artificial Intelligence

While the term “artificial intelligence” may be new, humankind has imagined facsimiles of life ever since we started building devices that could move on their own. Early examples of conceptual automata date back to Ancient Greece: Hephaestus, the god of invention and technology, created the bronze guardian Talos, as well as Pandora, who was sent to earth by the gods to infiltrate humanity and sow evil. Centuries passed, and while mechanical automata grew in complexity and elegance, only the development of modern computing has brought those long-dreamed possibilities within reach. Is it all hype? As with any new technology, there is certainly a good amount of that, but AI is also changing how we think about and solve problems.

Across industries, we see a strong focus on reducing the friction between human and machine, or the “hand-off” of tasks. Narrow forms of AI are used to enhance and support human activity rather than to handle an entire task. In the military, this includes the Integrated Visual Augmentation System (IVAS), which gives soldiers enhanced situational awareness. In healthcare, researchers are testing AI software that draws doctors’ attention to potential concerns, such as polyps, during routine scans. At NASA, the Exploration Medical Integrated Product Team (XMIPT) is looking for systems to automate medical inventory management on Artemis missions.

AI also opens new areas for exploration and innovation. Pragmatically speaking, how do we find meaning in the immense quantities of data we now collect? How can we quickly recognize and act on what we learn? New AI data-mining applications span industries: finding and creating designer molecules for pharmacology by cross-examining journals and through virtualization (e.g., ResoluteAI, Citrine, Kebotix); accelerating manufacturing or increasing output flexibility with Industry 4.0; and balancing volatile demand with supply while rerouting disrupted shipments. AI can also improve public safety by providing anomaly detection for air travel management and hydroelectric plants. The Public Safety Communications Research division of the National Institute of Standards and Technology (NIST) continues to search for open-source resources for AI-based analytic tools, specifically to support real-time analysis of streaming data from public safety communications and the other data streams that public safety officials monitor.

Most of these expansive goals require a degree of standardization and integration beyond current capabilities. We must establish how these algorithms structure, find, and ingest data. GO FAIR and OneDM are working toward this by developing data accessibility guidelines and data structure guidelines, respectively.

On the other side of this dilemma: how can we trust the recommendations AI gives us? Explainable AI allows developers to trace the decision process backwards and make sense of its reasoning (e.g., Deep Blue), which brings transparency to how decisions are made. While black-box AI (e.g., DeepMind’s AlphaGo) may deliver fast and pragmatically useful results, its full decision-making process remains obscured, making it hard to know whether we can depend on those results.

This is especially critical in areas like law enforcement, the military, and first responder deployments. Proponents claim that AI/ML algorithms allow for true neutrality because they lack human biases. In practice, however, machines are created in their makers’ image, and ML algorithms can display or even amplify existing human biases. Interestingly, synthetic data sets generated by ML algorithms can provide more robust training data than naturally generated data sets.

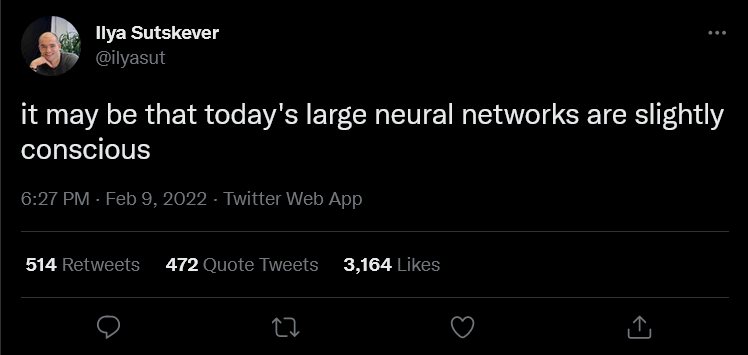

In 2019, DARPA awarded contracts to four organizations (SRI International, Raytheon BBN Technologies, Teledyne Scientific & Imaging, and BAE Systems) to develop competency-aware machine learning systems that can assess their own task competency. Self-awareness in machines would represent a singularity within the field of artificial intelligence: the emergence of general, or possibly even super, AI. Ilya Sutskever, cofounder of OpenAI, believes that some of the larger neural networks (such as GPT-3 and DALL-E 2) may already be becoming conscious, though determining this with any certainty is difficult. Our own understanding of consciousness is deeply limited. It was only a decade ago (2012) that scientists reached the consensus that “non-human animals, including all mammals and birds, and many other creatures, including octopuses, possess neurological substrates complex enough to support conscious experiences.”

Each of these examples was created with the intent to do good. Yet for every altruistic application of a powerful tool, we also find malicious uses: social media bots used to sway elections, and malware that learns and adapts to our security systems. Much like Talos and Pandora, these tools can be harnessed to enhance our strengths and shore up our weaknesses, but they can equally be used to exploit them. Their only limits lie at the boundaries of our imagination and our ethics.

These are just a few of our learnings from more than twenty years in the tech scouting business. If you’d like to learn more about a particular industry, or have a pressing technological question, reach out to our Director, Marcus Widell, and we’ll find a way to make our expertise work for you.

Related Tech Needs

Seeking: Applied R & D of AI and Machine Learning Technologies

Seeking: AI and Machine Learning Platform Technologies

USBR Seeking: AI Anomaly Detection for Hydroelectric Plants

Seeking: Open-Source Resources for AI-Based Analytic Tools

NASA Seeking: Automated Medical Inventory System

Photo Credit: Gerd Altmann from Pixabay